The goal of this project is to investigate whether image representations based on local invariant features and document analysis algorithms such as probabilistic latent semantic analysis can be successfully adapted and combined for the specific problem of scene categorisation. More precisely, our aim is to distinguish between indoor/outdoor or city/landscape images, as well as (at a later stage) more diverse scene categories. Scene categorisation is interesting in its own right in the context of image retrieval or automatic image annotation, and it also helps to provide context information to guide other processes such as object recognition or categorisation.

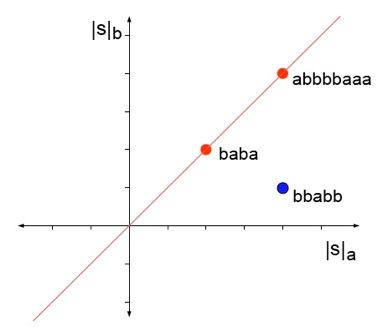

So far, the intuitive analogy between local invariant features in an image and words in a text document has been explored only at the level of object categories, not scene categories. Moreover, it has mostly been limited to a bag-of-keywords representation. The prime research objective of this project is to introduce visual equivalents of more advanced text retrieval methods that deal with word stemming, spatial relations between words, synonymy and polysemy. A second objective is to study the statistics of the extracted local features, to determine to what degree the analogy between local visual features and words really holds in the context of scene classification, or how the local-feature-based description needs to be adapted to make it hold.
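To make the bag-of-keywords analogy concrete, here is a minimal sketch of how local descriptors might be turned into a histogram of "visual words". It assumes a codebook of cluster centres is already available (for instance from k-means, which is not shown); the names `quantise`, `bag_of_visual_words`, `codebook` and the toy 2-D data are hypothetical and not taken from the project.

```python
from collections import Counter
from math import dist  # Euclidean distance, Python 3.8+


def quantise(descriptor, codebook):
    """Return the index of the codebook entry (visual word) nearest to the descriptor."""
    return min(range(len(codebook)), key=lambda k: dist(descriptor, codebook[k]))


def bag_of_visual_words(descriptors, codebook):
    """Map each local descriptor to its nearest visual word and count occurrences."""
    counts = Counter(quantise(d, codebook) for d in descriptors)
    return [counts.get(k, 0) for k in range(len(codebook))]


# Toy 2-D "descriptors"; real local invariant descriptors are much higher-dimensional.
codebook = [(0.0, 0.0), (1.0, 1.0), (5.0, 5.0)]
descriptors = [(0.1, -0.1), (0.9, 1.2), (4.8, 5.1), (5.2, 4.9), (0.0, 0.2)]
histogram = bag_of_visual_words(descriptors, codebook)
print(histogram)
```

The resulting histogram plays the role of a word-count vector for a text document. Like a bag of words, it discards the spatial arrangement of the features, which is one of the limitations described above.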

Semantic information recognition and extraction is the major enabler for next-generation information retrieval and natural language processing. Yet it is currently successful only in small domains of limited scope. We claim that moving beyond this restriction requires (1) performing integrated semantic extraction that incorporates a probabilistic representation of semantic content, and (2) making better use of the broader semantic resources now coming online. This project will explore both fundamental research and large-scale applications, using the publicly available Wikipedia as a driver and a resource. Research will explore the integration of semantic information into the language processing chain. Applications will employ this in broad-spectrum named-entity recognition, and in cross-lingual information retrieval using the rich but incomplete data available from Wikipedia. Three PASCAL sites will contribute pre-existing software, theory, and skills to the range of tasks involved.

The purpose of the project is to explore a new family of grammatical inference algorithms, based on the use of string kernels. These algorithms are capable of efficiently learning some languages that are context-sensitive, including many linguistically interesting examples of mildly context-sensitive languages. The project started on November 1st 2005, and finished at the end of October 2006. It is a collaboration between Royal Holloway and EURISE.
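The text does not say which string kernel the project uses. As an illustration of the general idea only, the sketch below implements a p-spectrum kernel, one of the simplest string kernels: two strings are compared by counting the contiguous substrings of length `p` that they share. The function names and the choice of kernel are assumptions, and the code does not perform grammatical inference itself.

```python
from collections import Counter


def spectrum_features(s, p):
    """Count every contiguous substring of length p in s."""
    return Counter(s[i:i + p] for i in range(len(s) - p + 1))


def spectrum_kernel(s, t, p=2):
    """p-spectrum kernel: inner product of the substring-count vectors of s and t."""
    fs, ft = spectrum_features(s, p), spectrum_features(t, p)
    # Counter returns 0 for substrings absent from t, so only shared ones contribute.
    return sum(count * ft[sub] for sub, count in fs.items())


print(spectrum_kernel("abab", "abab"))  # self-similarity
print(spectrum_kernel("aabb", "abab"))  # the two strings share only "ab"
print(spectrum_kernel("aaaa", "bbbb"))  # no shared substrings
```

Because the kernel is an inner product in the space of substring counts, strings can be handled with linear methods in that feature space without enumerating all possible substrings explicitly.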
